ISO 42001 Gap Analysis Everything You Need to Check Against the Full Standard.

Why Start with a Gap Analysis? (And Why This Guide is Different)

Most organizations jump straight into implementation and waste months (and money) fixing things they didn’t know were missing.

A professional ISO 42001 gap analysis is the smartest first step. It shows you exactly:

- Where you already comply

- Where your biggest risks and weaknesses are

- How much effort (time, budget, people) certification will actually take

This guide is not a short checklist. It is a complete, expanded, self-contained reference that walks you through the entire ISO/IEC 42001:2023 standard in simple language.

You can read it in one sitting, print it, or save it as a PDF. Every section includes:

- What the clause actually requires

- Real-world questions to ask yourself

- Typical gaps seen in Indian organizations

- Evidence you will need for certification

- Practical next steps

Let’s begin.

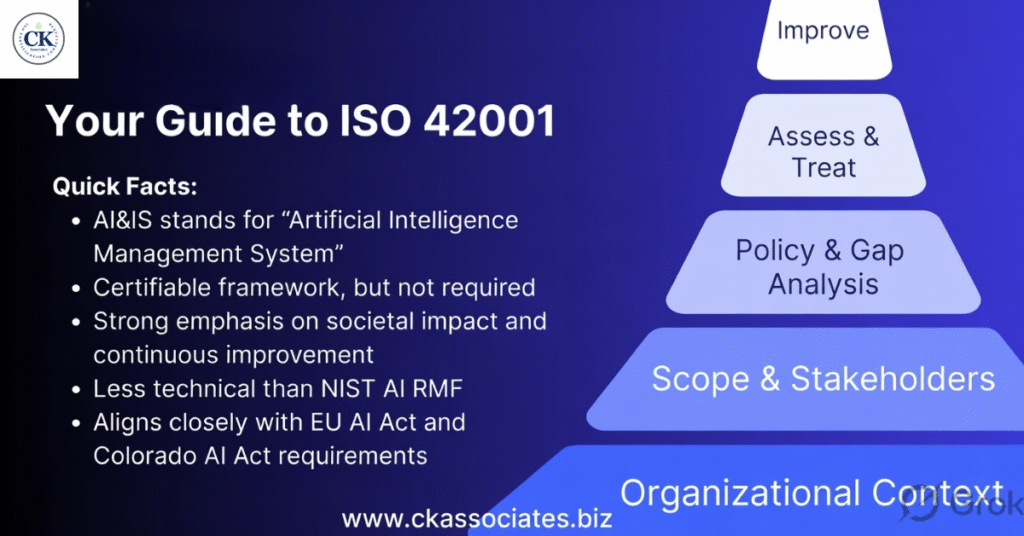

Quick Overview of ISO 42001 Structure

The standard follows the same high-level structure as ISO 27001:

- Clauses 1–3: Scope, references, and definitions (not audited heavily)

- Clauses 4–10: The 7 auditable requirements of your Artificial Intelligence Management System (AIMS)

- Annex A: 38 specific controls (normative – you must consider every one)

Below is the full gap analysis.

Clause 4: Context of the Organization

What the standard requires You must understand internal & external issues that affect your AI systems, identify interested parties and their needs, and clearly define the scope of your AIMS.

Gap Analysis Questions (Self-Check)

- Have you listed internal factors (culture, resources, existing policies) and external factors (laws, market trends, EU AI Act, Indian regulations)?

- Do you know what your customers, regulators, employees, suppliers, and investors expect from your AI?

- Is the scope of your AIMS documented? (Which AI systems are in/out? Which departments? Which lifecycle stages?)

Common Gaps

- Scope is too vague (“all AI we use”) → auditors reject it.

- No analysis of external AI regulations relevant to India or export clients.

- Interested parties identified but their specific AI-related expectations not documented.

Evidence Needed

- Documented “Context of the Organization” register

- List of interested parties + their requirements

- Approved AIMS Scope Statement

Action to Close the Gap Create a one-page Context Register and review it every 6 months.

Clause 5: Leadership

What the standard requires Top management must show visible commitment, establish an AI policy, assign roles/responsibilities, and ensure the AIMS is integrated into business strategy.

Gap Analysis Questions

- Does the CEO/MD personally approve the AI policy?

- Are clear roles (AI Governance Lead, Risk Owner, Ethics Officer, etc.) documented and communicated?

- Is leadership actively involved in AI risk reviews?

Common Gaps

- Policy exists but is written by IT only – no top-management sign-off.

- Roles are assumed (“everyone knows”) instead of documented.

- No evidence that leadership reviews AIMS performance.

Evidence Needed

- Signed AI Policy

- RACI matrix or job descriptions showing AI responsibilities

- Minutes of management reviews that cover AI

Action Get CEO sign-off on the AI Policy this quarter.

Clause 6: Planning

What the standard requires Identify AI risks & opportunities, perform AI Impact Assessments (AIIA) for high-risk systems, set measurable AI objectives, and plan how to achieve them.

Gap Analysis Questions

- Have you conducted a formal AI risk assessment (bias, privacy, security, safety, societal impact)?

- Do you have AI Impact Assessments for high-risk use cases?

- Are AI objectives SMART and linked to business goals?

Common Gaps

- Only generic risks identified – no AI-specific ones (hallucination, bias amplification, third-party model risks).

- No process for AIIA.

- Objectives are missing or not measurable.

Evidence Needed

- AI Risk Register (with likelihood, impact, treatment)

- Documented AI Impact Assessment reports

- AI Objectives & Plans

Action Run a workshop to build your first AI Risk Register.

Clause 7: Support

What the standard requires Provide resources, ensure competence, raise awareness, communicate internally/externally, and control documented information.

Gap Analysis Questions

- Do people working on AI have the right skills/training?

- Is there AI-specific awareness training?

- Are all AIMS documents version-controlled?

Common Gaps

- No training records for AI ethics or risk assessment.

- Documents scattered across drives with no version control.

- Communication plan missing for third parties.

Evidence Needed

- Competence matrix + training records

- Documented information procedure

- Awareness training attendance

Action Roll out mandatory “Responsible AI” training for all AI-involved staff.

Clause 8: Operation

What the standard requires Implement the processes needed to achieve AI objectives, manage the entire AI system lifecycle, apply Annex A controls, and handle third-party relationships.

Gap Analysis Questions

- Do you have documented processes for design → development → deployment → monitoring → retirement of AI systems?

- Are all applicable Annex A controls actually implemented and operating?

Common Gaps

- Lifecycle processes exist informally but are not documented.

- No operational controls for model monitoring or incident response.

- Third-party AI providers (OpenAI, Google, etc.) not assessed.

Evidence Needed

- AI Lifecycle Procedure

- Records of operational controls being used

Action Map your current AI development pipeline against the lifecycle requirements.

Clause 9: Performance Evaluation

What the standard requires Monitor, measure, analyze, and evaluate the AIMS performance. Conduct internal audits and management reviews.

Gap Analysis Questions

- Do you have KPIs for AI governance effectiveness?

- Have you scheduled internal audits of the AIMS?

- Does top management review AIMS performance at planned intervals?

Common Gaps

- No metrics (e.g., % of AI systems with impact assessments completed).

- No internal audit program yet.

- Management review happens for ISO 27001 but not for AI.

Evidence Needed

- Monitoring & measurement records

- Internal audit reports

- Management review minutes (with AI agenda)

Action Define 5–7 simple AI governance KPIs today.

Clause 10: Improvement

What the standard requires Identify nonconformities, take corrective action, and continually improve the AIMS.

Gap Analysis Questions

- Do you have a process for handling AI-related incidents or complaints?

- Is there evidence of continual improvement?

Common Gaps

- No formal nonconformity & corrective action process for AI.

- Lessons learned from past AI failures are not fed back into the system.

Evidence Needed

- Nonconformity register + corrective action records

- Evidence of improvements made

Action Add “AI incidents” to your existing incident management process.

Annex A Controls – Full Coverage Summary

Annex A is normative – you must evaluate every control and implement those that apply (or justify exclusions).

Here are the 9 Control Objectives with key controls explained:

| Objective | Key Controls | What It Means (Simple Explanation) | Common Gap |

|---|---|---|---|

| A.2 Policies related to AI | AI Policy, Alignment with other policies | High-level direction for responsible AI | Policy is generic, not AI-specific |

| A.3 Internal organization | Roles & responsibilities, Reporting concerns | Clear accountability + whistleblower process | Roles not documented |

| A.4 Resources for AI systems | Resource allocation & documentation | Enough people, tools, budget for AI governance | Under-resourced ethics function |

| A.5 Assessing impacts of AI systems | AI Impact Assessment process | Formal evaluation of societal, ethical, legal impacts | No AIIA process |

| A.6 AI system life cycle | Design, development, verification, validation, deployment | Responsible practices at every stage | Ad-hoc development only |

| A.7 Data for AI systems | Data quality, bias mitigation, privacy | High-quality, ethical training data | Poor data governance |

| A.8 Information for interested parties | Transparency & communication | Explain AI decisions to users/stakeholders | Black-box systems |

| A.9 Use of AI systems | Responsible use, human oversight | Controls when AI is in production | No monitoring after deployment |

| A.10 Third-party & customer relationships | Supplier assessment, customer expectations | Manage external AI providers & client needs | Unvetted third-party models |

Pro Tip: Create a Statement of Applicability (SoA) table that lists every one of the 38 controls, your decision (apply/exclude), justification, and implementation status.

How to Use This Guide Right Now (Step-by-Step)

- Read each clause above.

- Score yourself: Compliant / Partial / Not Compliant.

- Note evidence you already have.

- List actions in a simple tracker (Excel/Google Sheet).

- Prioritize high-risk gaps first.

Download Tip: Copy this entire blog into a Word document, add your company logo, and turn it into your official Gap Analysis Report.

Ready to Move from Gap Analysis to Certification?

CK Associates has already helped 10+ organizations in Hyderabad, Telangana, Andhra Pradesh, and across India complete their ISO 42001 gap analysis and achieve certification.

Our Gap Analysis Package includes:

- 2-day on-site/virtual workshop

- Detailed report with prioritized actions

- Custom templates for Scope, Risk Register, AI Policy, SoA, etc.

- Roadmap with exact timelines and costs

Typical outcome: Organizations discover 60–70% of requirements are new and close the gaps in 3–6 months.

Contact us today for a free 30-minute scoping call and we’ll show you exactly what your gap analysis would look like.

Frequently Asked Questions

Q: How long does a proper gap analysis take? A: 1–2 weeks for most mid-sized companies.

Q: Is this guide enough or do I still need a consultant? A: This guide is excellent for self-assessment and small teams. For faster, audit-ready results, professional help is recommended.

Q: Does it cover the latest 2023 version? A: Yes – fully aligned with ISO/IEC 42001:2023 requirements as of 2026.